# Name of handler to read task instance logs. # Turn unit test mode on (overwrites many configuration options with test # If set to False enables some unsecure features like Charts and Ad Hoc Queries. # What security module to use (for example kerberos): # Can be used to de-elevate a sudo user running Airflow when executing tasks # If set, tasks without a `run_as_user` argument will be run with this user # The class to use for running task instances in a subprocess # How long before timing out a python file import while filling the DagBag # Secret key to save connection passwords in the db Plugins_folder = /usr/local/airflow/plugins # get started, but you probably want to set this to False in a production # Whether to load the examples that ship with Airflow. # The maximum number of active DAG runs per DAG # whose size is guided by this config element # When not using pools, tasks are run in the "default pool", # The number of task instances allowed to run concurrently by the scheduler # the max number of task instances that should run simultaneously # The amount of parallelism as a setting to the executor. # How many seconds to retry re-establishing a DB connection after # a lower config value will allow the system to recover faster. If the number of DB connections is ever exceeded, # can be idle in the pool before it is invalidated. # The SqlAlchemy pool recycle is the number of seconds a connection # The SqlAlchemy pool size is the maximum number of database connections Sql_alchemy_conn = If SqlAlchemy should pool database connections. # SqlAlchemy supports many different database engine, more information # The SqlAlchemy connection string to the metadata database.

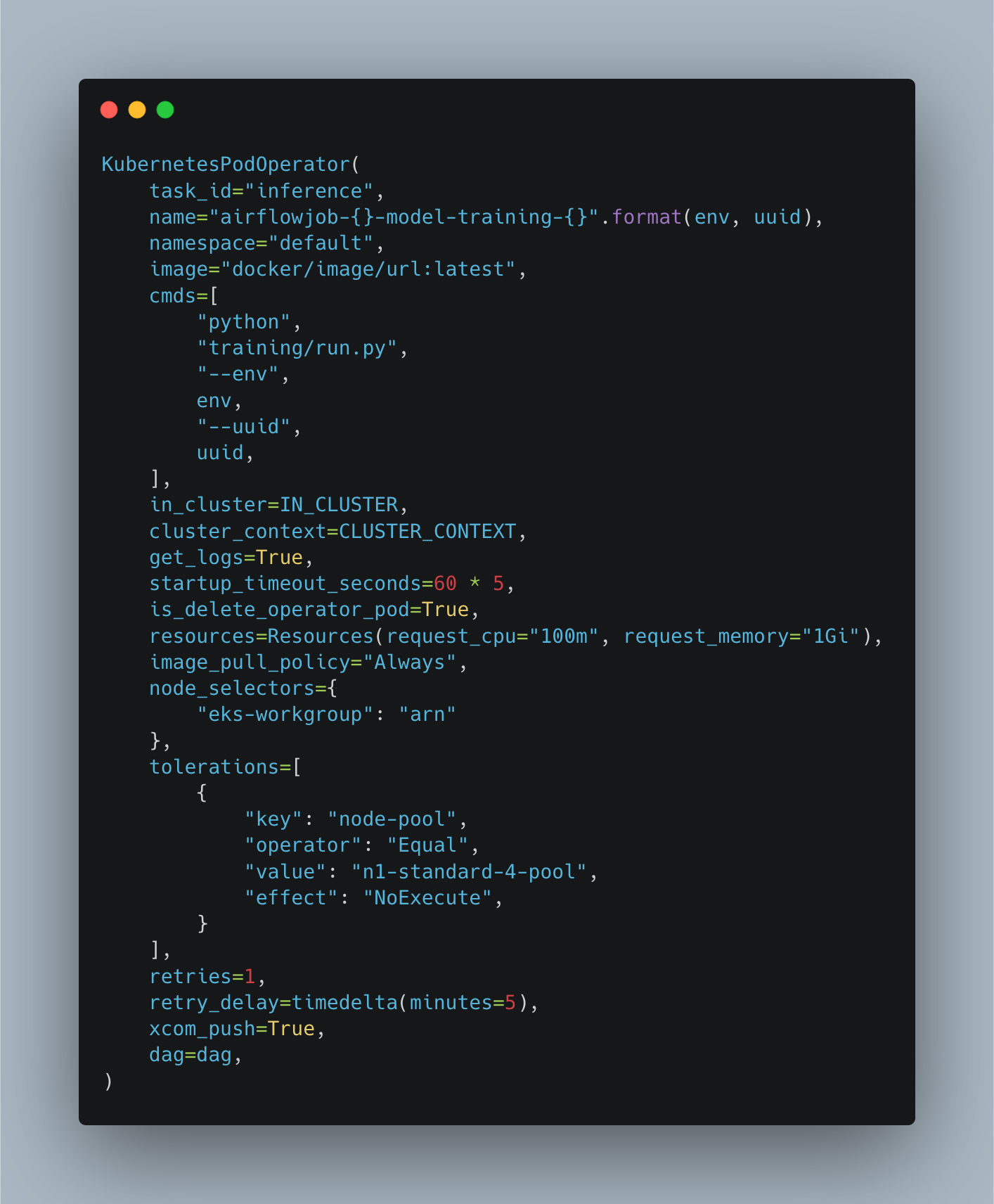

# SequentialExecutor, LocalExecutor, CeleryExecutor, DaskExecutor # The executor class that airflow should use. # can be utc (default), system, or any IANA timezone string (e.g. # Default timezone in case supplied date times are naive # Hostname by providing a path to a callable, which will resolve the hostname Passing = KubernetesPodOperator(namespace='airflow',įailing = KubernetesPodOperator(namespace='airflow',Ĭopy the file into the airflow k8s persistent volume: kubectl get pods -n airflow -o jsonpath=".log Start = DummyOperator(task_id='run_this_first', dag=dag)

'kubernetes_sample', default_args=default_args, schedule_interval=timedelta(minutes=10)) View the logs for the individual pods to know when they're up ( kubectl logs -f ) Load the sample airflow DAGĬopy the sample airflow dag from into a file named k8s-sample.py from airflow import DAGįrom _pod_operator import KubernetesPodOperatorįrom _operator import DummyOperator Note: The various airflow containers will take a few minutes until their fully operable, even if the kubectl status is RUNNING. Helm install -namespace "airflow" -name "airflow" -f airflow.yaml ~/src/charts/incubator/airflow/ This file sets the DB connection string ( sql_alchemy_conn = This is configurable via an enviroment variable in airflow's current master branch, but not in the 1.10 release. Helm dependency build ~/src/charts/incubator/airflow/Ĭopy the configmap-airflow-worker.yaml file attached below to. Helm installed and initialized in the minikube instanceĮdit the Dockerfile and add a line in the RUN command to install the kubernetes python packageĬonfigure docker to execute within the minikube VMīuild the and tag the image within the minikube VMĭocker build -t airflow-docker-local:1 Install the helm airflow chartĬreate a airflow.yaml helm config file: airflow:ĪIRFLOW_KUBERNETES_WORKER_CONTAINER_REPOSITORY: airflow-docker-localĪIRFLOW_KUBERNETES_WORKER_CONTAINER_TAG: 1ĪIRFLOW_KUBERNETES_WORKER_CONTAINER_IMAGE_PULL_POLICY: NeverĪIRFLOW_KUBERNETES_WORKER_SERVICE_ACCOUNT_NAME: airflowĪIRFLOW_KUBERNETES_DAGS_VOLUME_CLAIM: airflowįetch the helm dependencies for the airflow chart.This guide works with the airflow 1.10 release, however will likely break or have unnecessary extra steps in future releases (based on recent changes to the k8s related files in the airflow source). The steps below bootstrap an instance of airflow, configured to use the kubernetes airflow executor, working within a minikube cluster.
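To make the layout of the config file a bit more concrete, here is a minimal sketch that parses an airflow.cfg-style fragment with Python's standard configparser. It assumes the options live under a `[core]` section, as they do in Airflow's own config file. Only the `plugins_folder` value is taken from this guide; the `sql_alchemy_conn` URL is a made-up placeholder (the real value is not shown here), and `read_core_settings` is a hypothetical helper name.

```python
import configparser

# Hypothetical fragment: only plugins_folder comes from the guide's config;
# the connection string is a placeholder, not the guide's real value.
SAMPLE_CFG = """
[core]
# The executor class that airflow should use.
executor = KubernetesExecutor
# The SqlAlchemy connection string to the metadata database.
sql_alchemy_conn = postgresql://user:password@example-host:5432/airflow
plugins_folder = /usr/local/airflow/plugins
"""


def read_core_settings(cfg_text):
    """Return the [core] options of an airflow.cfg-style string as a dict."""
    # interpolation=None keeps any literal '%' in values from being expanded.
    parser = configparser.ConfigParser(interpolation=None)
    parser.read_string(cfg_text)
    return dict(parser["core"])


if __name__ == "__main__":
    for key, value in read_core_settings(SAMPLE_CFG).items():
        print(f"{key} = {value}")
```

A check like this can be handy before copying the configmap into place, since a typo in the connection string otherwise only shows up once the pods start.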

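The sample DAG is created with schedule_interval=timedelta(minutes=10). As a small standard-library sketch of what that interval means, the snippet below lists timestamps spaced ten minutes apart. The start date and the `schedule_points` helper are assumptions for illustration only; the guide's default_args are not shown, and Airflow's scheduler applies its own rules (start_date, catchup, and so on) on top of the plain interval.

```python
from datetime import datetime, timedelta


def schedule_points(start, interval, count):
    """Return `count` consecutive datetimes spaced `interval` apart from `start`."""
    return [start + i * interval for i in range(count)]


if __name__ == "__main__":
    # Hypothetical start date, chosen only for the example.
    start = datetime(2018, 9, 1, 0, 0)
    for point in schedule_points(start, timedelta(minutes=10), 4):
        print(point.isoformat())
```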